Why Infra Pentests Suck

Let's call him Marco.

We were both at the same consultancy, a few years into pentesting, stuck on site together at a client. I was mid-level, still figuring shit out, while he was senior. Italian, slim, quiet guy who would sit in the corner with his headphones in and not say a word until he casually dropped a JNDI lookup via LDAP on something the technical POC didn't even know had a Java backend. He'd grab every single JAR from the backend or, if the source wasn't available, ask the project team to provide them. He preferred curl to Burp for testing, and would then write his own client and chain together a custom gadget to pop the thing. I had serious respect for him. Still do.

One evening we're packing up and he mentions his next test is an infra gig. I say I don't mind those. He looks at me like I've just said something profoundly stupid and goes: "Infra sucks."

Not gonna lie, that did trigger me. At that point I already had over twenty years in IT - school networks back in the early 2000s, Windows and Linux sysadmin, data centres, SRE, and DevOps. I came into offensive security from the infrastructure side, and that was the thing I was actually good at and proud of.

Several years later? Yeah, infra does suck. But not because the work is boring. It sucks because the industry has turned a glorified vulnerability scan into something it calls a penetration test with better branding, because the system is rigged, and because compliance-driven testing and risk-based testing are fundamentally incompatible.

Throughout my chaotic pentesting career, I've tested firewalls, routers, and security appliances: blinking boxes that senior management burned money on to keep themselves warm and cosy. The one in this story is a classic backup migration virtual appliance with a web interface, SSH, restricted CLI, a handful of unknown services and ports, sitting in a privileged network zone because it needs connectivity to backup infrastructure, with a separate recorder component slapped straight onto the production backup servers. Consultancies have their own version of this story, but that's a different post. What follows is how this kind of thing typically gets "tested" at your compliance-driven corp, and what actually happens when you do it properly.

Five Days at Compliance Corp [1]

If you've worked in an enterprise pentest programme, what follows will look familiar. Here's how an appliance like this typically gets tested.

Scoping

A short Teams call run by (non-)technical project managers from the wider security team. They have a questionnaire, a form, and a process. There is no pentester on this call. Why would there be?

Web UI? Tick: "web application." SSH access? Tick: "infrastructure." Number of pages? "Less than 30." Restricted shell access? "Restricted command line interface, not really testable." The SOW is signed for five days of web app plus infrastructure testing.

Day 1

I start by hunting for a pentest jumpbox. I need a GUI for Burp, so I'm sticking to the Windows ones. The Windows servers have multiple VLANs, some for regular VM networks and one on the dedicated scanner network, and need manual static routes across data centres just to reach the target estate. Word of mouth has it that someone on a previous engagement scanned too aggressively and knocked over a pair of legacy firewalls, so there are rules now about which ranges I can scan and which subnets I'm not allowed to touch.

Nessus plugins are two months old. No internet on jumpboxes, so no automatic updates. I try to install Burp, but EDR blocks it. Tools have to go in a specific whitelisted path to get past the EDR, and it looks like the SOC removed the exclusions again. I raise a ticket, which goes into a queue. There's also the Npcap versus WinPcap conflict to sort out before anything else; Nmap ships with Npcap, Nessus needs WinPcap, and having both installed on the same jumpbox means one works and the other doesn't. Meanwhile, I pull tools manually from a shared drive, drag Nessus plugins across, start setting static routes so the scanners can reach the target directly.

This takes all morning, between the coffee breaks and lunch.

By end of day I have a scanner that can see the appliance and tools that are allowed to run, but I haven't tested anything or even looked at the appliance yet. The environment is ready though, and tomorrow we start for real. Productive day.

Day 2

Authenticated scanning is the next step because Nessus with credentials can report missing patches. I try SSH and the appliance drops me into a restricted shell with hundreds of commands. A few bypass attempts from HackTricks go nowhere, so I move on because there's no time to waste on this. The scoping documents and notes don't explain much about the appliance's internals.

The internal Pentest Runbook 2.0 has been "under review" since forever and contains wisdom like "if Nessus fails, try Qualys" and "ensure scanner appliance is in the correct network zone" without specifying which of the several VA scanner appliances it might be. I spend an hour figuring out which one can reach my target, get it pointed at the appliance, configure a scan policy from the limited templates available because I can't create custom ones, and kick it off. I set Nmap running a full TCP and UDP scan in the background as well because you can never have too much coverage.

Both scanners end up unauthenticated because I can't get credentialed scanning to work on either. By five o'clock I have results: missing security headers, broken cipher suites, and a self-signed certificate on an internal backup appliance that talks to every production backup set in the environment. Pat on the shoulder.

Day 3

I spend the morning actually testing the thing. I google the product to death, then run Burp against the web interface. Insecure cookies, a default admin account, no MFA. Findings are building up nicely.

Now that I have working admin credentials I want to run a Burp active scan, but there's a problem. It would blindly fill in every form and perform every action as admin, which could easily cause a denial of service condition or alter settings in ways that would be difficult to revert. The appliance is technically pre-production, but the backup team are actively using it for migration testing and treating it as production, so nobody set up a separate test instance. I flag it to the project manager who says no, too risky, light-touch only.

I ask ChatGPT to generate some findings based on my notes, scan output and whatever I could find online about the product. The severity ratings are on the high side and don't quite align with CVSS scoring. I tweak a couple downward, leave the rest. The report needs substance.

1. Vendor-Shipped Default Credentials - medium
Default admin password was changed prior to deployment, but vendor ships with documented defaults. Recommend unique per-device credentials per UK PSTI Act 2024.

2. Single Privileged Admin Account - high
No principle of least privilege, no MFA, no audit trail

3. Multiple Open Ports - high
Nmap identified 12 open TCP ports including SSH (22), HTTPS (443), SMB (445), and custom service on 55560. Attack surface should be minimised per CIS benchmarks.

4. Antivirus Disabled During Migrations - critical
Malware deployment opportunity, ransomware risk

5. Self-Signed Certificate - medium
Man-in-the-middle attack vector, credential interception

6. Insecure Session Cookies - medium
Session hijacking, unauthorised access to admin interface

7. Missing Security Headers - medium
Clickjacking, content injection, cross-site scripting

8. Broken Cipher Suites - medium
Weak encryption, traffic interception

9. No Multi-Factor Authentication - high
Single-factor authentication on admin interface, credential stuffing risk

10. Reflected Input in Web Interface - medium
User input reflected in HTTP responses, potential cross-site scripting

Day 4

Still poking at the web interface and I intercept a request in Burp where the API takes a job parameter. I modify it to $(sleep 5) and the response takes five seconds. Command injection. I can barely contain myself.

I try to get a reverse shell but bash, netcat, and Python all fail to connect back on every port I try including 443, 53, and 8080. Something between me and the appliance is dropping my payloads. I spend hours going in circles with shell and protocol obfuscation techniques, bind shells, etc. before accepting that nothing is going to work from this network position.

I take the rest of the day to polish the write-up: HTTP request and response screenshots, the sleep evidence, the ICMP callback, a clean description of the attack flow. This is a high severity finding on a backup management appliance with a single privileged admin account. I join the weekly team call to walk everyone through my methodology for fifteen minutes, the obstacles I overcame, the trials by fire, and how I persevered where a lesser tester would have given up. Someone says "trials overcome" and I make a note for my end-of-year appraisal and 1337-tester-of-the-month nomination.

Day 5

Reporting. I pull together the ChatGPT findings, the scan results, and the command injection. The list looks solid: the command injection is a high; SSLv3 and TLS 1.0 still enabled, weak SSH and TLS cipher suites, a self-signed certificate, vendor-shipped default credentials that could be a risk if not changed, no MFA, and insecure cookies are all mediums, followed by a few lows and best-practice notes. Twenty-plus findings and a good spread of severities. The report goes into QA and the PDF is delivered.

Kebab and pastel de nata on the way home. What a week.

Post-engagement

The project team aren't happy with the findings for some reason. I hear through the grapevine that the sysadmins are complaining they can't restrict SSL ciphers or change cipher suites on a vendor-shipped appliance because it voids the support agreement, but that sounds like a "you problem" to me. The findings are valid and the evidence is in the report.

A few weeks later I notice the command injection has been quietly downgraded from high to medium. Compensating controls: the team already has admin access, so the additional risk is "marginal." Nobody asked me about it.

Eventually all findings are closed in the tracking system. The appliance was decommissioned in pre-prod. The production instance is still live, but that's a different asset.

Five Days Done Right

Same appliance, different organisation, risk-driven pentest.

Pre-engagement

Attack surface mapping and threat modelling come attached to a JIRA ticket from my line manager, a seasoned red teamer slowly converting into a spreadsheet warrior, with supply chain compromise flagged as a major risk: the appliance sits in a privileged network zone with direct connectivity to backup infrastructure, patches are distributed through a vendor portal, and a compromised image or tampered update would give an attacker access to the entire backup estate. Same class of attack as SolarWinds.

Scoping

We join the scoping call on Teams. Surprisingly, two vendor representatives have already joined because the backup team invited them to answer questions about backup set migrations, which turns out to be the best accident of the entire engagement. I use the vendor presence to clarify process and product details, and once I understand it's a virtual appliance deployed as an OVA, I ask for the image so I can deploy it in a lab for more meaningful and intrusive testing. There are minor objections from the internal team, but nothing that can't be overcome with a reasonable explanation of why testing a live appliance in production with kid gloves isn't testing at all.

Day 1

The NetBackup team had already deployed an instance for migration testing ahead of schedule. I notice that corporate laptops can hit its SSH and web interfaces when connected to VPN, which is either lax firewall rules or a systemic finding from a previous engagement that was never fixed. I let my manager know so he can chase it down, and move on.

I download the appliance image and the latest patch from the vendor-provided site and ask for integrity verification. The vendor responds via email with an MD5 checksum and instructions to "use md5sum command to generate a MD5 Checksum." MD5 has been broken since 2004, there are no digital signatures, and the checksums aren't published alongside the downloads. I note it down. By the end of the day the appliance is up and running on Proxmox in our test bed.
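For contrast, here is a minimal sketch of the check a vendor could support by publishing SHA-256 digests next to the downloads. The function names and the idea of a "published" digest are assumptions for illustration, not anything the vendor provided. A hash only proves the file matches the published value; proving who published it still needs a digital signature.

```python
import hashlib
import hmac


def sha256_of_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in fixed-size chunks so large images don't need to fit in RAM.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_digest(path: str, published_hex: str) -> bool:
    """Compare the local digest with a (hypothetical) vendor-published one."""
    local = sha256_of_file(path)
    # Normalise whitespace and case before a constant-time comparison.
    return hmac.compare_digest(local, published_hex.strip().lower())
```

The comparison is only as trustworthy as the channel the digest arrived through, which is why a checksum sent by email on request is a note-worthy finding in itself.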

Day 2

Questions and requests go through a dedicated Teams chat with the PM, a technical POC, a security architecture rep, and my manager. While waiting for responses I watch the appliance boot in the Proxmox console and catch a glimpse of systemd units starting, which is a solid indicator that the appliance is running some sort of Linux underneath whatever the vendor's marketing material calls it.

I attach a Kali live image to the VM and boot from it. parted -l confirms two partitions: an encrypted LUKS volume on sda2 and an unencrypted /boot on sda1. I mount the boot partition, remove the GRUB password, note the hash for hashcat later, and add a systemd single user mode boot option. Reboot, detach the Kali ISO, and the appliance boots into single user mode with a read-only root filesystem. There are defensive measures in place that disable privileged accounts added to passwd by locking them out on reboot, but I bypass them before logging off and have a rooted appliance ready for testing.

Day 3

With root access I check /etc/passwd and find the SSH login user is restricted to a shell called CLISH. It's open source with code and documentation hosted on SourceForge, so I pull the schema and start reading. The input validation is defined in an XML file, and the String type that's used across most of the implementation relies on a single regex pattern: [^\-]+. It blocks hyphens and nothing else, so shell metacharacters sail straight through.
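The effect of that pattern is easy to see in a few lines of Python. This is a sketch only: the pattern is the one quoted above, but treating it as a whole-value match is an assumption about how the schema applies it, and the allowlist pattern and sample inputs are hypothetical, included purely for contrast.

```python
import re

# Pattern quoted in the text; full-match semantics are an assumption here.
HYPHEN_ONLY = re.compile(r"[^\-]+")
# Hypothetical stricter allowlist, for contrast only.
ALLOWLIST = re.compile(r"[A-Za-z0-9_.]+")

# Illustrative inputs, not taken from the appliance.
SAMPLES = ["backup01", "--help", "job1; id", "$(sleep 5)", "a|b", "`id`"]


def accepted(pattern: re.Pattern, value: str) -> bool:
    """True when the whole value matches the validation pattern."""
    return pattern.fullmatch(value) is not None


for sample in SAMPLES:
    print(f"{sample!r:<14} hyphen_only={accepted(HYPHEN_ONLY, sample)} "
          f"allowlist={accepted(ALLOWLIST, sample)}")
```

Everything without a hyphen passes the first pattern, including semicolons, pipes, backticks and command substitution. Blocking one character is a denylist of size one; an allowlist of known-good characters fails closed instead.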

I confirm command injection via SSH and land as admin, a restricted local account despite the name. That's one CVE. I want the full chain to root, so I spend the rest of the day looking for a local privilege escalation and find one through the TapeDumper service. Two CVEs by end of day.

Day 4

The patching subsystem is next. The Perl sources are obfuscated, wrappers are deleted post-execution, and there's a fair amount of anti-reversing in place. It takes a lot of back and forth between the patch scripts, the web application, and strace output. By the time I wrap up I understand the full mechanism: patches are encrypted but not signed, with a static key shared across every patch and every installation. Encryption isn't authentication.
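Why "encrypted but not signed" matters can be shown with a toy model. Everything below is an illustrative assumption: the key, the patch contents, the installer function and the cipher itself (SHA-256 in counter mode, XORed with the data) are stand-ins and have nothing to do with the vendor's actual scheme.

```python
import hashlib

# Hypothetical stand-in for a key shared by every patch and every install.
STATIC_KEY = b"same-key-in-every-install"


def keystream(key: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 in counter mode. Illustration only."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def toy_encrypt(key: bytes, data: bytes) -> bytes:
    """XOR data with the keystream; the same call also decrypts."""
    return bytes(a ^ b for a, b in zip(data, keystream(key, len(data))))


def install_patch(blob: bytes) -> bytes:
    """Hypothetical installer: decrypts and accepts whatever comes out."""
    return toy_encrypt(STATIC_KEY, blob)


vendor_patch = toy_encrypt(STATIC_KEY, b"echo applying vendor fix")
# Anyone holding the static key can build a blob the installer cannot
# tell apart from a genuine one.
tampered = toy_encrypt(STATIC_KEY, b"echo applying vendor fix; echo extra step")

print(install_patch(vendor_patch))
print(install_patch(tampered))
```

The installer has no way to distinguish the two blobs because decryption succeeding says nothing about who produced the ciphertext. A signature verified against a vendor public key, with the private key never leaving the vendor, is what would answer that question.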

The FTP service used for backup transfers runs its own "encryption" that turns out to be static XOR obfuscation with the vendor's copyright string as the key. Two more CVEs, four total, though I haven't built the proof-of-concept exploits yet.
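A short sketch shows why repeating-key XOR is obfuscation rather than encryption. The key string and the sample plaintext below are hypothetical placeholders, not the vendor's real copyright string or real transfer data.

```python
from itertools import cycle

# Hypothetical placeholder for a static, publicly visible key string.
KEY = b"(c) Example Vendor Ltd"


def xor_repeat(data: bytes, key: bytes) -> bytes:
    """XOR data against a repeating key; applying it twice restores the input."""
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


plaintext = b"BACKUPSET 0001 /data/finance.tar " * 2
obfuscated = xor_repeat(plaintext, KEY)

# The same function with the same key undoes the "encryption".
recovered = xor_repeat(obfuscated, KEY)

# Known plaintext XORed with the ciphertext yields the key stream directly.
leaked = xor_repeat(obfuscated, plaintext)
print(recovered == plaintext, leaked[:len(KEY)])
```

Two properties sink the scheme: the key never changes, and any stretch of predictable plaintext hands the key back to whoever captures the traffic.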

Day 5

The full attack chain for the unsigned patch upload: decrypt a legitimate vendor patch, inject a backdoor, re-encrypt with the same static key, upload through the web interface, trigger it, root shell. I move on to the web application Flask source at /opt/SRLtzm/web/tranzman/views/ where the API endpoints pass user input to the shell with minimal sanitisation, and confirm a root reverse shell. Five CVEs. The lower-severity findings (inadequate account security, SELinux in permissive mode, the observation notes) I log as I go. Report writing bleeds into after hours.
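The class of bug in those endpoints, and the usual fix, fits in a few lines. This is a generic sketch assuming a POSIX system with an echo binary; the function names and the harmless payload are hypothetical and this is not the appliance's code.

```python
import subprocess


def run_job_unsafe(job: str) -> str:
    """Interpolates input into a shell string, so the shell parses it."""
    result = subprocess.run(f"echo job={job}", shell=True,
                            capture_output=True, text=True, check=True)
    return result.stdout.strip()


def run_job_safer(job: str) -> str:
    """Passes input as a single argv element; no shell ever parses it."""
    result = subprocess.run(["echo", f"job={job}"],
                            capture_output=True, text=True, check=True)
    return result.stdout.strip()


# Harmless command substitution used as a marker.
payload = "$(echo injected)"
print(run_job_unsafe(payload))
print(run_job_safer(payload))
```

In the first function the substitution is evaluated before echo runs; in the second it is printed back as literal text. Avoiding the shell removes the parsing step entirely, which is more robust than trying to filter metacharacters.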

Post-engagement

Handover meeting with the project team. I walk through every finding, answer questions, and defend the severity ratings. The project manager engages directly with the vendor and shares our report within days. Go-live is halted until the issues are resolved. Within a few weeks the vendor produces patches and sends them back to us for retest; they've fixed the high and medium findings in the new release - nice. At the same time, we engage with MITRE and begin the responsible disclosure process.

Why This Keeps Happening

Same appliance tested twice. One engagement produced twenty-something findings that could have come from a VA scan, and the other produced five CVEs, a responsible disclosure, and vendor patches. The difference isn't talent, tools, or time. It's everything that happens before the tester opens a terminal.

Scoping

Across the industry, scoping is either a pre-sales function or a project management checkbox, staffed by people whose job is to estimate effort and produce a statement of work, not to understand what they're testing. The scoping questionnaire becomes the scope, and the scope becomes the ceiling. The tester inherits a scope that was negotiated between someone who can't connect the dots between what they're scoping and what a compromise would mean and someone who doesn't want to pay for more than five days.

Vulnerability assessment ≠ penetration testing

"pen in #pentest stands for penetration, not audit" - @kmkz_security

Laurent Desaulniers, in his 2022 Are PenTests Dead? session, said that roughly half the penetration tests in North America are compliance-driven, and the compliance half want to find as little as possible because every finding creates work for the auditors. A polite and diplomatic way of saying that a significant portion of what gets sold as penetration testing is vulnerability assessment with a different label on the invoice. A more recent study shows that 75% of infosec firms conduct penetration tests primarily to satisfy compliance requirements.

A quick Google search for "Vulnerability Analyst" with Burp and Nessus as required tools returns postings from major QSA and cybersecurity consultancies advertising the same scope: "conduct network and application vulnerability scans" and "manually verify vulnerabilities identified in scans."

Looks familiar? The penetration tester role has been quietly downgraded until it's indistinguishable from a vulnerability analyst. Same tools, same process, same output, different label on the SOW because that's what the compliance framework requires. As Jake Williams put it, "vulnerability scanning is an audit function. It is not 'proactive security.'"

The Compliance Corp tester wasn't incompetent. He was doing exactly the job he was hired to do: run scanners, document findings, produce a report. The problem is that what he was hired to do has nothing to do with what penetration testing is supposed to be. The difference between a vulnerability scan and a penetration test is the human factor, and the industry has spent the last decade systematically removing it to make the process cheaper, faster, and more predictable. To add insult to injury, Cobalt's 2025 State of Pentesting found that 48% of vulnerabilities are never remediated, the top vulnerability categories haven't changed in five years, and noticed in 2022 that 65% of organisations had at least one repeat critical finding in consecutive annual pentests. Testers are finding the same issues, writing the same remediation sections, for clients who never action them. This is why experienced pentesters silently leave, or pivot into management, research, or red teaming.

The compliance conflict

Here's the part that nobody wants to say out loud: compliance and penetration testing aren't just different activities with different goals - they're fundamentally incompatible. Compliance wants to minimise findings because each one becomes a risk register entry, an auditor conversation, a remediation ticket, and a potential blocker to go-live. Penetration testing exists to find and exploit as many vulnerabilities and configuration issues as possible in the time allocated, with focus on business impact. Running both through the same process is like asking your estate agent to also do the survey. The conflict exists across every compliance framework, but it's most structurally embedded in PCI DSS, where the economics of assessment create a direct incentive to minimise findings.

It starts with how assessors are paid. PCI Qualified Security Assessors are selected and paid directly by the organisations they certify, from a pool of around 390 globally listed companies. A QSA that fails a large client risks losing the engagement and the follow-on consulting work that typically accompanies it. Branden Williams, who managed a team of over 80 QSAs, described one of the gravest errors as "bowing to threats about the future" - clients telling their assessor "if you don't mark this as compliant, I am giving my business to someone else." Anitian documented it more bluntly: "We have observed organisations that are profoundly non-compliant get passing grades from other QSAs." They reported the extreme version: at multiple client engagements, the previous QSA had "spent a few days locked in a conference room, talked with nobody, looked at no systems, reviewed no configurations, and then delivered a signed Report on Compliance." In the programme's entire history, only one QSA company has ever been publicly decertified.

The irony is that in these environments, the pentest has become part of the paperwork, not part of the protection. What started as due care has been reduced to due diligence. Penetration testing was supposed to answer the question "can someone exploit this?" Instead it answers the question "can we prove we tested this?" Those are not the same question, and the gap between them is where breaches live.

Visa's former chief enterprise risk officer said it plainly in 2009: "No compromised entity has yet been found to be in compliance with PCI DSS at the time of a breach." The uncomfortable footnote is that Visa gets to declare non-compliance retroactively after a breach, which means the compliance certificate protects you right up until the moment you actually need it.

This isn't hypothetical. Between 2023 and 2026, every major edge appliance vendor - Ivanti, Palo Alto, Cisco, Fortinet, F5 - had critical zero-days exploited at scale against the exact class of device that compliance programmes list as "in scope." Exploits have been the number one initial infection vector in Mandiant's incident response data for five consecutive years, and in 2024 all four of the most exploited CVEs targeted edge infrastructure. The Verizon 2025 DBIR found that targeting of VPNs and internet-facing appliances rose eightfold in a single year, and only 54% of perimeter device vulnerabilities were fully remediated over twelve months. Mandiant's 2026 report measured the mean time-to-exploit at negative seven days - exploitation beginning, on average, before a patch even exists. The Dutch military intelligence service disclosed that Chinese state actors maintained persistent access to over 20,000 FortiGate appliances worldwide using malware that survived firmware upgrades - meaning organisations that patched, fulfilling their compliance obligations, remained compromised. The appliances were in scope. The compliance programmes produced passing assessments. The devices were still owned.

Certifications

This deserves its own discussion because the cert ecosystem feeds directly into the pipeline that produces Compliance Corp testers, but it's complicated because the certs themselves aren't the problem. OSCP taught a generation of pentesters how to think offensively. I'm still proud of mine from 2014; I started with Penetration Testing with BackTrack and they upgraded the course to Penetration Testing with Kali Linux mid-way through. IRC support, shared labs, $60 retakes. Genuinely good value for money.

Twelve years on, the same cert has become a hiring filter rather than a competence signal, and the consultancies that hire based on certs are the same consultancies that scope by questionnaire and bill VA work as pentesting.

Silent Signal's recruitment data tells the rest of the story: two thirds of OSCP holders who submitted a report during their recruitment challenge couldn't exploit a reflected XSS with a filter bypass and an SQL injection with Base64 encoding in a Flask app. These aren't juniors. These are certified, experienced professionals at international companies who can't get past a regex filter. The cert says they can, but the evidence says they can't (nothing $2-3k spent on another "web expert" offsec certificate wouldn't fix).

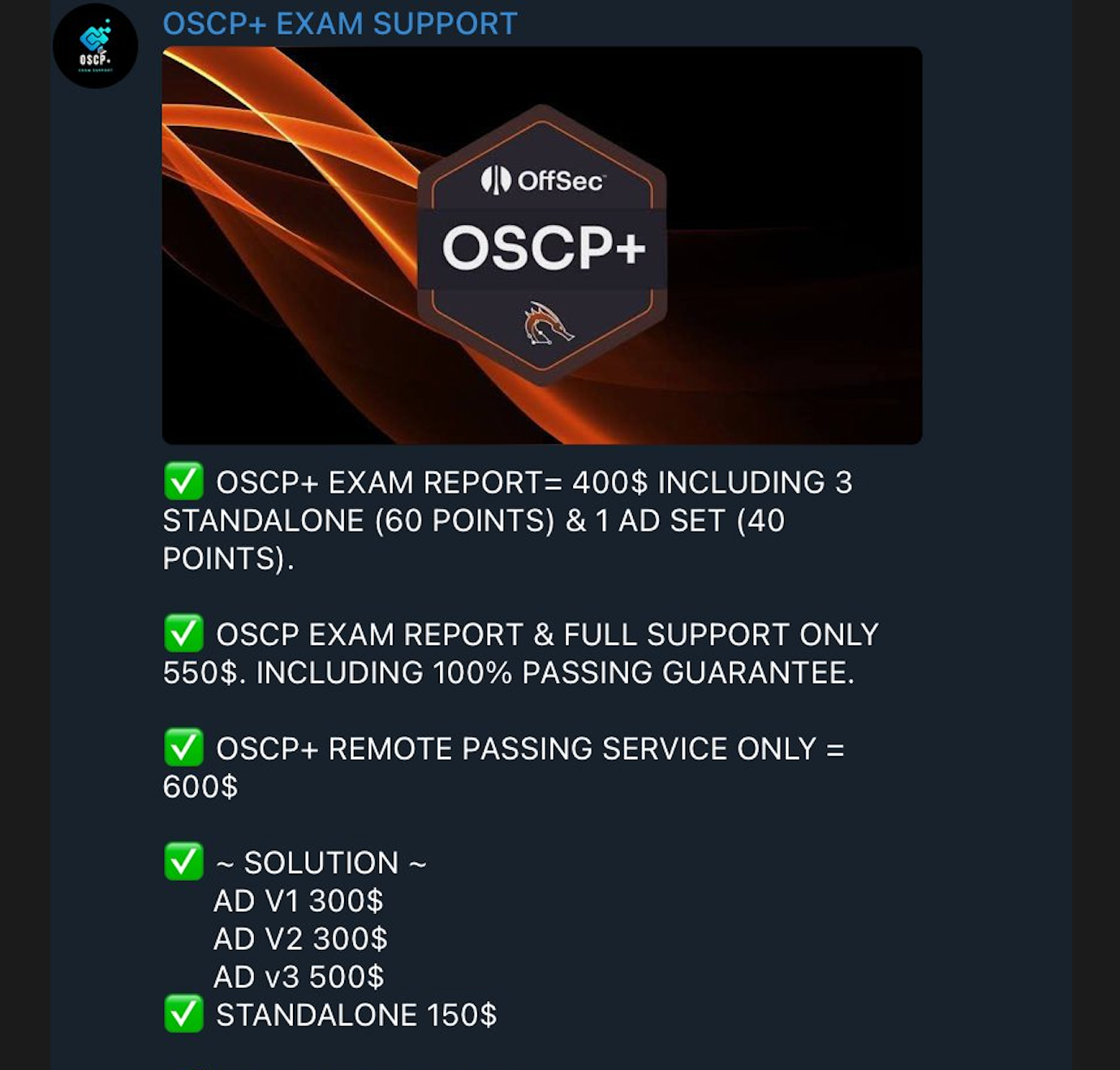

And with Telegram groups and websites openly selling OSCP+ exam reports, "remote passing services" with a 100% guarantee, and individual standalone solutions for a fraction of the course fee, it's not hard to see why. The cert that's supposed to prove you can hack things can itself be hacked for a third of the price.

The pipeline is broken at both ends. ISC2's 2024 data shows only 17% of employers are actively seeking entry-level recruits, and demand for junior roles fell 8% in two years. The certs are being devalued at the same time as the jobs they're supposed to unlock are disappearing.

Meanwhile, the training ecosystem is drunk on money. OffSec has been sold twice to private equity firms and is firmly in "milk the cow" mode. The course went from roughly $800 in 2013 to $1,749 in 2025, exam retakes from $60 to $249, and then the OSCP+ rebrand turned a lifetime certification into a three-year expiry with a $799 renewal exam. The LinkedIn announcement for their Klarna partnership reads "You were made to Try Harder. Now you can Pay Smarter." The Reddit community renamed it "Pay Harder."

As Justin Elze called it, OffSec is "moving into corporate land and away from what made it great."

TryHackMe went the other direction, offering around 80% of their content for free, then quietly launched NoScope, a commercial AI pentester whose marketing originally referenced millions of user journeys and years of data (TryHackMe denies this). If something is free, you are the product.

Good training still exists though. CRTO from Zero Point Security is genuinely excellent material at a fraction of the price, with no course expiry, free exam retakes, and purchasing power parity pricing. TCM Security did the same thing with PNPT, affordable courses and a founder who started by posting free content on YouTube. The alternatives are there, and more accessible than ever. For now.

While writing this post, Fortra acquired Zero Point Security. Educate 360, a Morgan Stanley Capital Partners portfolio company, acquired TCM Security last year. Heath Adams, the founder and face of the brand, has since left. Both are now corporate-owned. RastaMouse says he sold because the admin was consuming the time he wanted to spend writing training, and that the courses stay: "My vision for Zero-Point Security has always been to provide accessible and cost-effective training for 'the little guy'. Although we will be working on new training programs for the business market, we have no intention of altering the availability and pricing strategies of existing courses like RTO." OffSec said the same thing when they took Spectrum Equity's money in 2018: "not much will be changing on our side." Either way, the window for cheap, founder-priced offensive security training is closing fast.

Even setting aside the cheating, these certs might be on borrowed time anyway. The NCSC defines penetration testing as 'attempting to breach some or all of that system's security, using the same tools and techniques as an adversary might.' In practice, those tools now include public exploit databases, automated reconnaissance frameworks, and increasingly capable AI agents. Yet most certification exams still lock candidates in proctored environments that ban internet access, restrict tooling, and forbid AI assistance, testing for a version of the job that no longer exists.

Keith Hoodlet walked away from OSWE entirely, calling it 'a profound waste of time to study for an exam that only allows for manual, human-driven efforts.' The Replit CEO argues that knowing how to code is becoming a disadvantage because 'coders get lost in the details' while problem-solvers ship faster. The same logic applies here: does it matter what you've memorised when the adversary is using every tool available?

What Now

This isn't a solutions piece. The people who need to hear them aren't reading this blog; they're three levels up, repeating "it is what it is" at every risk review, interested only in maintaining the status quo because the system that produces passing reports is the same system that keeps them comfortable, their projects on track, and their performance-based bonuses intact. They're not ignorant. They're dependent.

The solutions exist, they're just not profitable: threat modelling, attack surface mapping, and scoping by people who know what they're doing; talking to the people who built and run the system, because they understand it better than any documentation will; testing in an isolated lab where you can actually break things; findings that come with a full PoC or at least a plausible exploit path (as albinowax noted); source code access for review whenever possible; exploit chaining with a business-risk narrative; and post-engagement processes that don't end with a PDF in a SharePoint folder.

There's more to this story. The full technical walkthrough of each CVE, from the appliance image download to the responsible disclosure. A week of pentesting inside OpenShift Dev Spaces and the Metasploit module that came out of it. Whether I write them up depends on whether anyone's still reading. Let me know.

[1] Compliance Corp is a composite. It does not describe a specific organisation or engagement.